AI has been revolutionizing every industry for the last decade, and the test automation industry is no different. The Autify ML team explores cutting-edge AI research and how it can be used to accelerate test automation. In this blog post, we look at how Autify is already using the latest developments in AI in some of its current and upcoming features.

MLUI (Mobile)

When interacting with an app, a user sees and interacts only with the screen, not the source code. However, most test automation tools rely on the app’s source code to generate and execute test scenarios. We want to provide our customers with test systems that work the way humans (QAs) test applications. This is the motivation behind MLUI.

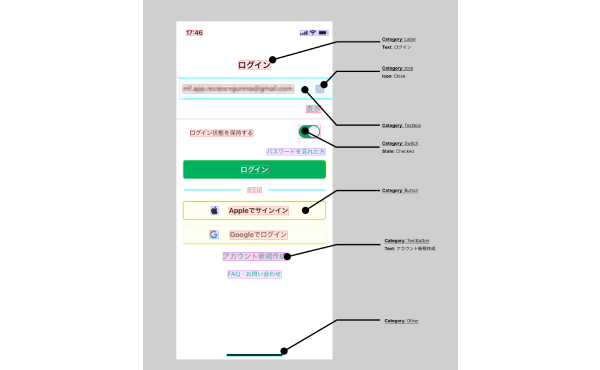

MLUI (short for Machine Learning-based UI extraction) is one of Autify’s flagship AI projects. It uses state-of-the-art deep learning techniques to extract UI element information purely from screenshots. This allows us to provide our customers with more human-like test automation.

Imagine a test scenario where a button becomes hidden due to a mistake in the code. Since the button still exists in the source, a source-based automation tool might still be able to click it. MLUI, however, looks only at the screenshot of the app. So, if a button is not visible, MLUI-based testing would not be able to locate it, and the test would fail.
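
To make the hidden-button scenario concrete, here is a minimal toy sketch in Python. It is not Autify's implementation and involves no machine learning: the `Element` class and the `locate_in_source` and `locate_on_screen` functions are hypothetical names invented for illustration, and the `visible` flag stands in for "would appear in a screenshot".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Element:
    """Hypothetical UI element used only for this illustration."""
    element_id: str
    label: str
    visible: bool  # whether the element is actually rendered on screen


def locate_in_source(tree: list[Element], element_id: str) -> Optional[Element]:
    """Source-based lookup: finds the element whether or not it is rendered."""
    return next((e for e in tree if e.element_id == element_id), None)


def locate_on_screen(tree: list[Element], label: str) -> Optional[Element]:
    """Stand-in for screenshot-based lookup: only rendered elements can be found."""
    rendered = [e for e in tree if e.visible]  # what a screenshot would contain
    return next((e for e in rendered if e.label == label), None)


app = [
    Element("submit-btn", "Submit", visible=False),  # hidden by a bug
    Element("cancel-btn", "Cancel", visible=True),
]

source_hit = locate_in_source(app, "submit-btn")  # found: the test would pass
screen_hit = locate_on_screen(app, "Submit")      # not found: the test would fail
```

In this sketch the source-based lookup still returns the hidden button, so a test built on it would miss the bug, while the screen-based lookup returns nothing and the test fails, as a human tester would expect.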