TL;DR: AI functional testing uses machine learning and natural language processing to create, execute, and maintain tests that verify whether your software does what it’s supposed to do. It doesn't replace QA thinking, but it removes the grunt work that comes with constant maintenance. This guide covers what AI functional testing actually looks like in practice, how it compares to traditional approaches, and what to consider before adopting a tool.

We've all been there: a test suite the team relies on every day suddenly reports a wall of selector errors. Not much has changed. The suite still covers the same user flows.

The assertions haven't changed either, but because a developer swapped a div for a section and renamed a handful of CSS classes, dozens of automated tests collapsed like a house of cards.

If that story sounds familiar, you're probably already wondering if there is a better way. There is, though it comes with some nuance worth understanding before you dive in. This article walks through that nuance.

What Is AI Functional Testing?

Functional testing checks whether your application behaves correctly from a user’s perspective. Let’s use an example to understand this.

From a user's perspective, certain things matter: the login flow working, the shopping cart calculating the total accurately, or the checkout completing successfully.

This is exactly what functional testing would entail. It's concerned with what the software does, not how the code is structured underneath.

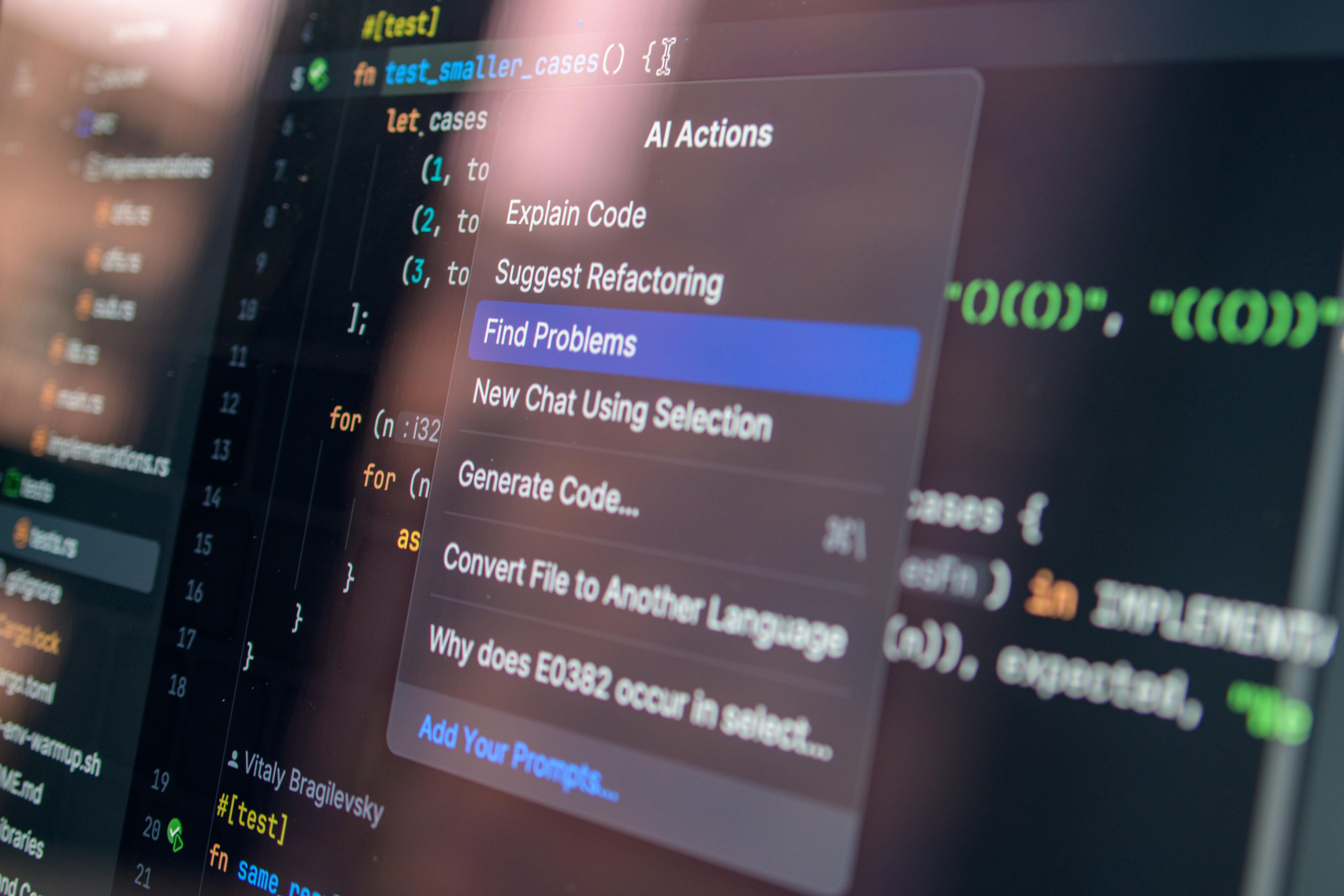

AI functional testing applies machine learning, computer vision, and natural language understanding to that process.

Instead of writing brittle scripts tied to specific DOM elements, you can describe what you want to test in plain English—something like "add an item to the cart and verify the total updates." The AI figures out how to interact with the application, evaluates the outcome, and reports back.

This is an important shift. Traditional test automation asks you to be both a QA thinker and a scripting technician. AI functional testing tries to separate those concerns, letting you focus on the thinking.

How Does It Actually Work?

The mechanics vary by tool, but the general pattern looks something like this: you begin by describing the tests in natural language.

There are no XPath expressions, CSS selectors, or other implementation details. You simply state what a user would do and what should happen when they do it.

The AI interprets your intent and interacts with the application the way a person would, using visual recognition and contextual understanding to find elements, click buttons, and fill out forms.

Whether a test passes or fails also depends on the assertions you define, again in plain language. Think something like "the confirmation page should display the order number," rather than checking a specific <span> tag for a string match.

The AI evaluates whether the actual outcome matches your expectation, and when things fail, you get evidence in the form of screenshots, step-by-step logs, and plain-language explanations of what happened and why.

This evidence matters more than it sounds. A report that shows what happened, with screenshots attached, shortens the back-and-forth over whether a failure is real. And here's the important bit in all of this: you're still the one deciding what to test and what counts as correct. The tool only handles the execution.
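To make the shape of this workflow concrete, here is a minimal sketch of how a plain-language test and its evidence could be represented as data. Every name in it (`PlainLanguageTest`, `Evidence`, `RunReport`), the screenshot paths, and the sample explanations are hypothetical, chosen for illustration; this is not the format of any particular tool.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PlainLanguageTest:
    """A test written as intent: what the user does and what should be true."""
    name: str
    steps: List[str]
    assertions: List[str]


@dataclass
class Evidence:
    """What a run might record for one step or assertion (hypothetical shape)."""
    description: str
    passed: bool
    screenshot: str    # path to a captured image (illustrative only)
    explanation: str   # plain-language account of what happened


@dataclass
class RunReport:
    test: PlainLanguageTest
    evidence: List[Evidence] = field(default_factory=list)

    @property
    def passed(self) -> bool:
        # An empty report is not a pass: nothing was actually checked.
        return bool(self.evidence) and all(e.passed for e in self.evidence)

    def summary(self) -> str:
        lines = [f"{self.test.name}: {'PASS' if self.passed else 'FAIL'}"]
        for e in self.evidence:
            mark = "ok" if e.passed else "FAILED"
            lines.append(f"  [{mark}] {e.description} ({e.screenshot})")
            if not e.passed:
                lines.append(f"      why: {e.explanation}")
        return "\n".join(lines)


# Illustrative data only; a real tool would produce the evidence itself.
checkout = PlainLanguageTest(
    name="Checkout shows order number",
    steps=["Add an item to the cart", "Complete the checkout"],
    assertions=["The confirmation page should display the order number"],
)
report = RunReport(checkout, [
    Evidence(checkout.steps[0], True, "step-1.png", "Item appeared in the cart."),
    Evidence(checkout.steps[1], True, "step-2.png", "Checkout form was submitted."),
    Evidence(checkout.assertions[0], False, "step-3.png",
             "The confirmation page loaded, but no order number was visible."),
])
print(report.summary())
```

Notice that the human-authored part (steps and assertions) is kept separate from the machine-produced part (evidence), which mirrors the division of labor described above: you decide what counts as correct, and the tool reports what it observed.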

AI Functional Testing vs. Traditional Automation

It's tempting to frame AI functional testing vs. traditional automation as "old way bad, new way good," but the reality is more of a gradient than a binary.

Traditional testing frameworks give you precise, granular control over every interaction. You know exactly which element is being targeted, exactly what assertion is running, and exactly where to look when something breaks.

That control matters for certain edge cases, and for teams with deep automation expertise, it can feel like a perfectly fine setup—until the UI changes and thirty tests shatter overnight.

The core difference is how each approach handles fragility. Traditional tools rely on selectors, such as IDs, class names, and XPath, that are tightly coupled to the DOM. If you change the markup, the tests break as well, even if the application still works the same way.

AI tools use visual and contextual matching instead. A button is still a button even if the developer wraps it in a different container, renames its class, or moves it twenty pixels to the right.
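The difference can be sketched with nothing but Python's standard library. The example below is a deliberately simplified illustration, not how any AI testing tool is implemented: real tools rely on visual and contextual models, whereas `find_by_intent` here only matches visible text. The helper names and the two HTML snippets are assumptions made up for the demonstration.

```python
from html.parser import HTMLParser


class ButtonCollector(HTMLParser):
    """Records each <button> with its parent tag, its class, and its visible text."""

    def __init__(self):
        super().__init__()
        self.open_tags = []   # stack of currently open tag names
        self.buttons = []     # one dict per <button> found
        self._button = None   # the button currently being read, if any

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            parent = self.open_tags[-1] if self.open_tags else None
            css_class = dict(attrs).get("class") or ""
            self._button = {"parent": parent, "class": css_class, "text": ""}
        self.open_tags.append(tag)

    def handle_data(self, data):
        if self._button is not None:
            self._button["text"] += data

    def handle_endtag(self, tag):
        if tag == "button" and self._button is not None:
            self._button["text"] = self._button["text"].strip()
            self.buttons.append(self._button)
            self._button = None
        if self.open_tags and self.open_tags[-1] == tag:
            self.open_tags.pop()


def collect_buttons(html):
    collector = ButtonCollector()
    collector.feed(html)
    collector.close()
    return collector.buttons


def find_by_selector(html):
    """Mimics the selector `div > button.btn-primary`, which is coupled to markup."""
    return [b for b in collect_buttons(html)
            if b["parent"] == "div" and "btn-primary" in b["class"].split()]


def find_by_intent(html, label):
    """Matches on what the user sees: a button with the given visible label."""
    return [b for b in collect_buttons(html) if b["text"].lower() == label.lower()]


# Same button, before and after a cosmetic refactor (made-up markup).
BEFORE = '<div class="actions"><button class="btn-primary">Add to cart</button></div>'
AFTER = '<section class="actions"><button class="cta">Add to cart</button></section>'

for name, page in (("before", BEFORE), ("after", AFTER)):
    print(name,
          "selector match:", bool(find_by_selector(page)),
          "intent match:", bool(find_by_intent(page, "Add to cart")))
```

The selector-style lookup stops finding the button once the wrapper and class name change, while the lookup keyed on what the user sees keeps working. That is the property AI tools aim for, with far more robust matching than a string comparison.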

There's also the question of who can write tests. Traditional automation requires programming knowledge. Someone has to write and maintain those scripts.

AI functional testing opens the door to QA analysts, product managers, and manual testers who understand the application deeply but don't write code.

How to Get Started

My simplest advice is to start small, say with the one regression suite that breaks most often.

This suite is your pilot because it gives you two things at once: a clear baseline to measure improvement against and immediate relief for your team if the approach works. And if it doesn't work well for those tests, you'll learn that quickly too.

Autify's Aximo is worth looking at as a starting point. You write test steps in plain English, define assertions in natural language, and run everything across web, mobile, and desktop from a single agent.

What might be genuinely useful is how Aximo builds context about your application over time. The more tests you run, the better it understands your app's structure and expected behavior.

Early runs may need more explicit assertions, while later runs tend to get sharper as that context builds. The detailed logs with screenshots and AI-generated explanations also make failed test reviews less painful for everyone.

Once you've picked your pilot suite, resist the urge to migrate everything at once. Run the AI tests in parallel with your existing automation for a few cycles.

Compare coverage, compare maintenance effort, and compare how long it takes someone new on the team to understand what each test does. Those comparisons will tell you more than any vendor demo ever could.
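One way to keep that comparison honest is to log the same few numbers for both suites each cycle. The sketch below assumes you record, per cycle, how many runs happened, how many failures came from real bugs versus UI churn, and how many minutes went into test maintenance. The `SuiteCycle` fields and the figures in the usage lines are placeholders for illustration, not measurements from any real project.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SuiteCycle:
    """One release cycle's worth of results for a single suite (hypothetical fields)."""
    runs: int
    failures_from_real_bugs: int
    failures_from_ui_churn: int   # failures where the app still worked correctly
    maintenance_minutes: int      # time spent fixing tests, not the app


def summarize(cycles: List[SuiteCycle]) -> Dict[str, float]:
    """Aggregates cycles into the two numbers the pilot is meant to compare."""
    if not cycles:
        raise ValueError("need at least one cycle to summarize")
    total_runs = sum(c.runs for c in cycles)
    if total_runs == 0:
        raise ValueError("no runs recorded")
    churn_failures = sum(c.failures_from_ui_churn for c in cycles)
    minutes = sum(c.maintenance_minutes for c in cycles)
    return {
        "false_failure_rate": churn_failures / total_runs,
        "maintenance_minutes_per_cycle": minutes / len(cycles),
    }


# Placeholder numbers purely to show the call shape.
existing_suite = [SuiteCycle(10, 1, 4, 120), SuiteCycle(10, 0, 2, 60)]
pilot_suite = [SuiteCycle(10, 1, 1, 30), SuiteCycle(10, 0, 0, 10)]

for label, cycles in (("existing", existing_suite), ("pilot", pilot_suite)):
    print(label, summarize(cycles))
```

Separating failures caused by real bugs from failures caused by UI churn is the design choice that matters here: a suite that fails often because it catches defects is doing its job, while one that fails because markup moved is only generating maintenance work.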

Best Practices for AI Functional Testing

The biggest mistake I see teams make is treating AI testing like a strategy replacement rather than an execution upgrade.

AI changes how you run tests, not whether you need a coherent plan for testing. Risk-based testing, boundary analysis, and equivalence partitioning are all still relevant.

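But the single biggest lever you have for test quality is writing clear, specific assertions. The more precise you are about what "correct" means, the more useful your test results become. "The cart total should read $42.00 after adding two $21.00 items" can be checked; "the cart should look right" cannot.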

Use the reporting as a collaboration tool, not just as a debugging artifact. One underappreciated benefit of AI-generated test logs is that non-technical stakeholders can actually read them.

Screenshots with plain-language explanations of what happened and why something failed can be used by the entire team during reviews, incident postmortems, or sign-offs.

Lastly, always keep humans in the loop for judgment calls.

AI can tell you whether the checkout flow was completed and the confirmation appeared. But it’s less reliable at telling you whether the confirmation felt intuitive to the user. Exploratory testing still needs human intuition, and it probably will for a while.

What to Look For in an AI Testing Tool

What separates a useful tool from a gimmicky one tends to come down to a handful of capabilities. You want natural language test creation where you're writing intent, not dictating clicks.

You want visual and contextual element recognition so tests survive UI changes without manual intervention.

Cross-platform support matters if your app runs on more than just the web because maintaining separate toolchains for mobile and desktop is the kind of overhead AI testing should be eliminating, not reproducing.

You should also look at the test results the tool produces. Good AI testing tools produce evidence that non-engineers can follow too: screenshots, step-by-step narratives, and clear explanations of failures.

Pay attention to what your tool learns as well. A tool that starts from zero every time you run a test is doing pattern matching, not building an understanding of your application.

Tools like Aximo that build progressively deeper context about your application, such as its structure, expected states, and common patterns, deliver compounding value the longer you use them.

FAQ

How Do You Use AI in Functional Testing?

To use AI in functional testing, you describe test scenarios and expected outcomes in natural language. The AI interacts with your application and evaluates whether the results match your assertions, so you are still the one defining what "correct" looks like.

Can AI Functional Testing Replace Manual QA?

AI functional testing can't replace QA entirely, and it probably shouldn't. AI handles repetitive regression checks efficiently, but exploratory testing, usability judgment, and edge-case creativity still benefit from human testers. Think of the AI as handling the predictable so your team can handle the unpredictable.

How Does AI Handle Test Maintenance?

AI functional testing tools use visual and contextual recognition rather than hardcoded selectors. When a button moves or a class name changes, the AI still identifies the element by what it looks like and what it does, much as a human tester wouldn't be thrown by a redesigned page if the workflow stayed the same.
