‘Even minor bugs undermine the user’s confidence.’ Quality cannot be compromised. Automating tests with a low-cost, codeless solution to accelerate operation

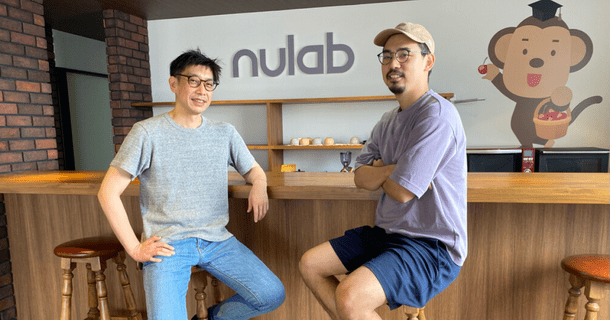

CTO Yuta Tokitake & QA (Quality Assurance) engineer Aya Kawasaki

LegalForce Inc. provides “LegalForce”, which is a cloud platform that reviews contracts. Their mission is to “make all contract risks controllable.” Founded in 2017 by two lawyers, it is a fast-growing startup in legal tech.

The LegalForce platform’s AI quickly checks and points out any disadvantageous or missing clauses in the user’s contract. It even suggests sentence improvements.

By automating tests with Autify, they have created new operational rules, and new functions are being developed faster. We interviewed Mr. Yuta Tokitake, director and CTO who joined the company as the first engineer, and Ms. Aya Kawasaki, a QA (Quality Assurance) engineer.

Degradation verification slipped through the cracks as more functions were developed

— What challenges did you face before introducing Autify?

Yuta: We didn’t have any dedicated QA staff at the time. The development engineer was in charge of the whole process, all the way up to E2E testing. They could reflect the development stage’s intentions in the verification process, so there were some pros to this. However, as the development workload increased, it became increasingly difficult to maintain quality while making new releases quickly.

Although the developer could manage the QA of the function under development, we started noticing degradation. In other words, existing functions were being affected by new ones, and the developer couldn’t handle that along with their existing workload. As the number of functions increased, the workload for dealing with degradation also increased. We couldn’t handle it all.

Verifying degradation before each release was becoming difficult. There was even an instance where we only noticed an issue after a user pointed it out. At this point, we thought that automating tests would reduce the QA workload in the medium to long term. We took the plunge out of desperation.

— Did you consider other methods of automation, such as Selenium, before considering Autify?

Yuta: Yes, we tried Selenium, but we wouldn’t have been able to implement it given the developer resources we had at that time. We eventually just used it for verification and didn’t end up implementing it. If you were to develop a testing system using Selenium, engineers would have to maintain test cases, and the workload for maintenance and operation would increase. In the end, we decided that it wasn’t realistic, given how time-consuming it would be to develop and test.

— Is cross-browser verification important to you?

Yuta: Yes, it is. Our service is targeted towards businesses, so about 30% of our clients use Internet Explorer (IE). As you can imagine, we need to verify on both Chrome and IE. When we manually tested in those browsers, sometimes there were issues only present on IE. It was a pain!

— I understand what you mean!

Give confidence to users by combining manual and automated testing

— I hear that some fast-growing startups can’t manage testing. It seems like your company is aware of how important testing is.

Yuta: I think QA is the most important process for continuously providing high-quality products. We deal with contracts, so the stakes are high. It would be a disastrous situation if there’s a data breach or if other users can see another client’s data. We believe it’s very important to secure data and to provide a service that can be used with complete confidence.

We do our best to ensure that there aren’t issues like that, of course. But bugs do occur. An engineer who understands the backend may not think it’s a significant issue, but a user may doubt whether our service is reliable. This is why overall quality is something we can never compromise.

But as I mentioned earlier, it would be too time-consuming to test it all manually. We started automating any degradation/scenario checks as much as possible. Our staff handles anything that can only be done manually. In that way, we can maintain quality while keeping the cost as low as possible. Knowing this, we had a positive attitude towards automation, even in the initial stage.

— What was the process like up to test automation?

Yuta: Last year, Mr. Chikazawa (CEO of Autify, Inc.) came to our company for a hands-on training session. All of our developers attended it. I remember being very impressed by how easy it was to create test cases by interacting with pages. I was also fascinated that Autify uses AI in its test automation software.

In the past, you had to write some code and then compare the code with actions. It was shocking how easy it is to create a scenario by just clicking the page like you normally do.

Ms. Kawasaki, a new staff member, created a comprehensive scenario for the entire service, which became the basis for our rules, and we took the first step towards implementation.

Having multiple test cases saves time

— Did you have any creative ideas when you implemented Autify?

Aya: When creating test cases on Autify, we assign IDs to each function as if they’re tags. This comes in handy when managing all the test cases. It allows us to quickly understand what the scenario is checking.

My predecessor prepared a Google spreadsheet and numbered it by function, such as 01-001. We gradually improved it by adding links to scenarios and descriptions on each function, such as what it can/cannot do. It’s really helpful for managing it all. Autify can’t handle the design portion at the moment, so we leave a note and test manually.
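The numbering scheme described above could be modeled roughly as follows. This is a minimal sketch, not LegalForce's actual spreadsheet or any Autify API: the `NN-NNN` ID format is inferred from the `01-001` example, and the scenario titles and helper functions are hypothetical.

```python
import re
from collections import defaultdict

# Assumed ID format, inferred from the "01-001" example:
# two-digit function number, hyphen, three-digit case number.
ID_PATTERN = re.compile(r"^(\d{2})-(\d{3})\b")


def parse_scenario_id(title):
    """Return (function_no, case_no) from a title like '01-001 Upload contract'."""
    match = ID_PATTERN.match(title)
    if match is None:
        raise ValueError(f"title does not start with an ID: {title!r}")
    return match.group(1), match.group(2)


def group_by_function(titles):
    """Group scenario titles by their function number."""
    groups = defaultdict(list)
    for title in titles:
        function_no, _ = parse_scenario_id(title)
        groups[function_no].append(title)
    return dict(groups)


# Hypothetical scenario titles, for illustration only.
titles = [
    "01-001 Log in as administrator",
    "01-002 Log in as member",
    "02-001 Upload a contract file",
]
groups = group_by_function(titles)
print(groups["01"])
```

Keeping the function number at the front of each title means a quick filter or sort is enough to see which scenarios cover a given function.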

We used to test the production environment only, but we recently set Autify up to perform the whole test in the test environment. This comes in handy when we want to run a final check before release. The QA engineer generally creates the Test Scenario, but we’ve created a guide so that the development engineer can do it too. We’ve set things up so that the whole team can operate it.

— Development engineers are involved with testing too?

Yuta: That’s right. At first, we had prepared actions that were slightly time-consuming, such as uploading a file. But people who don’t usually create scenarios couldn’t easily understand it. I realized that it was wasting time.

There are times when you want to run the entire scenario and times when you want to pick a specific function. We’ve prepared it so that there is a Test Plan for checking the entire scenario and one for checking a specific portion of the scenario.

Combining creativity and Autify's functions for smooth implementation

— It’s great that you’ve got it all organized. What does your QA team look like now?

Aya: There are two QA staff members, including myself. (At the time of the interview)

Yuta: I would like to hire more staff for the QA team and work on visual regression tests using Autify. There are still many things we can do, including improving QA.

— The visual regression function is coming soon. I’m glad you’ll be using it when it is released.

Yuta: We are working on many UI fixes, so when there’s a major fix, the platform’s UI changes drastically. It would be great if we could maintain the visual side when we work on minor fixes. We ask the designer if there are any issues with the display every time we make a fix, but ideally, I’d like to automate that too. It would make it easier to make minor modifications such as refactoring (organizing the source code) while maintaining the visuals.

— Are there any other creative ideas you’ve come up with for implementation?

Aya: We’ve had some original ideas to run the same scenario in the production environment and the test environment. For example, when checking the URL, we’ve adjusted it so that you can check whether something is included in the URL and that you’re on a specific page. This utilizes Autify’s verification item for the assertion “Confirm that XX is included.”
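The idea behind the "is included" check can be illustrated outside Autify. The sketch below is only an approximation in plain Python, not Autify's implementation: the hosts and paths are hypothetical, and restricting the check to the URL path is an assumption made here to keep the example strict.

```python
from urllib.parse import urlparse


def url_contains(current_url, expected_fragment):
    """Return True when the path of current_url contains expected_fragment."""
    return expected_fragment in urlparse(current_url).path


# Hypothetical hosts and paths; the real LegalForce URLs are not given in the interview.
production_url = "https://app.example.com/contracts/123/review"
test_url = "https://test.example.com/contracts/123/review"

# An exact comparison would tie the scenario to one environment.
print(production_url == test_url)

# A "contains" check passes in both environments.
for url in (production_url, test_url):
    print(url_contains(url, "/contracts/"))
```

Because only the environment-independent part of the URL is checked, one scenario can confirm that it landed on the right page regardless of which host it runs against.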

Also, we’ve used Autify’s Step Group function to manage login privileges. We have one scenario for administrator privileges and another for member privileges.

Verification process, which used to take 4-5 hours, reduced to 1 hour by parallel execution

— You have created an excellent system! What changes did you see since introducing Autify regarding the issues you had before?

Yuta: We often failed to notice degradation, so a lot of it fell through the cracks. With Autify, there was a clear reduction in those issues. Degradation used to happen weekly, but now it only happens once a month. I think it has been very effective.

Aya: We used to only use the production environment. By running the verification process on the test environment as well, we can carry out a system test before release and a degradation verification after release. It was well-received by engineers.

Even people who can’t write code can create scenarios on Autify, and QA engineers can directly edit scenarios. This has dramatically reduced communication costs for verification because QA engineers can easily adjust or add parts of the scenario based on their QA perspective.

QA engineers generally manage test scenarios since development engineers are busy with development and don’t have time to create them. However, by making it possible to execute Autify scenarios even in a test environment, engineers have more opportunities to interact with Autify. I think we’ve managed to create a system where the developer team can run any test anytime.

Also, this is not a change directly resulting from implementing Autify, but I recently started using Autify’s parallel execution function. Scenario execution used to take 4-5 hours, but now it takes only about an hour. We execute scenarios daily and weekly. More specifically, we run 13 scenarios daily and 20 scenarios weekly. When it comes to checking the overall regression, there’s a considerable amount to check. Parallel execution has significantly reduced the amount of time it takes for that process, and it’s been incredibly useful.
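To see why parallel execution shortens the run so much, here is a rough arithmetic sketch. All numbers in it are hypothetical: the interview does not state how long each scenario takes or how many run in parallel, so the 15-minute duration and the worker count of 5 are assumptions chosen only to land in the same range as the figures quoted above. The function is likewise a simple greedy estimate, not how Autify schedules runs.

```python
import heapq


def wall_clock_minutes(durations, workers):
    """Estimate total run time when each scenario goes to the first free worker."""
    if workers < 1:
        raise ValueError("workers must be at least 1")
    finish_times = [0] * workers  # min-heap of each worker's finish time
    heapq.heapify(finish_times)
    for minutes in durations:
        earliest = heapq.heappop(finish_times)
        heapq.heappush(finish_times, earliest + minutes)
    return max(finish_times)


# Hypothetical workload: 20 scenarios at 15 minutes each (not from the interview).
durations = [15] * 20
print(wall_clock_minutes(durations, workers=1))  # 300 minutes, i.e. 5 hours
print(wall_clock_minutes(durations, workers=5))  # 60 minutes
```

The total amount of testing is unchanged; only the waiting time shrinks, roughly in proportion to the number of scenarios running at once.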

Create a better system by incorporating test cases at the specification stage

— What are your plans for quality control?

Aya: There are some minor tests that we haven’t been able to automate yet. For example, we still manually test LegalForce’s Word file review function. We are planning on automating a wider range of features in the future.

Yuta: Regarding implementation rules, I would like to continue building more sophisticated tests, including the visual regression I mentioned earlier. It would be great if we could use Autify as a final check method and create test cases when we have the specifications for new functions. This is based on the concept of shift left. We can further accelerate operation if we can establish a system that identifies which scenario to check, implements it, and tests. Executing the right test scenario would prevent many issues down the line.

— You can create scenarios quickly, and by running tests first, you can identify bugs early on.

Yuta: Exactly. If we can create a scenario while successfully integrating the development engineer’s and the QA engineer’s perspective, we’ll be able to perform more robust tests. That’s where I’d like to make improvements.

Autify is a silver bullet for development teams who don't have the resources for test automation

— Do you have any advice for those who are planning on working with test automation?

Yuta: Front-end E2E tests are expensive, and the effects are not always apparent. There are other issues, such as needing an engineer to implement it, and constructing the platform can be difficult.

I think larger companies can hire SETs (Software Engineers in Test) and automate on a large scale, but it was difficult for a company of our size. I think many development teams want to automate E2E testing but can’t because they don’t have the time or resources for creating test cases, operating them, and building the infrastructure. Autify is the silver bullet that solves those issues. I used to think there’s no such thing as a silver bullet, but I was wrong!

By implementing Autify at LegalForce, I learned that the key to success was to create rules that work for the team. Once the operational rules were established, productivity skyrocketed. We’ve been able to create a system where we can improve the quality of our products.

Aya: I won’t deny that it does initially take some time to create scenarios to verify functions. But once those are set up, you can test at any time, in any environment. I think Autify is especially useful for companies where there are few QA engineers and development engineers are too busy to handle everything.

Also, I love that the support staff is available whenever we have questions about scenario creation or test results. I think the customer success team gave us the confidence to introduce Autify.

— We are working to ensure smooth automation not only through providing tools but also by offering support by actual humans, so I’m glad to hear that’s been useful. Thank you very much. Do you have anything else you’d like to add?

Yuta: We currently have two staff in our QA team, but there is so much more for us to do. If you are interested in quality assurance and test automation, please check out our Careers page. We’re looking forward to hearing from enthusiastic individuals who are keen to create an innovative QA organization.