What is Regression Testing?

To begin, let’s establish what a regression is. In software terms, a regression is an unwanted or unintended malfunction (a bug) in a feature that formerly worked as expected. This type of bug is normally introduced by an error in the software’s design, or at the source code level when the software undergoes changes such as new features or bug fixes; it is, hence, a human error.

Suppose we add a new feature to our application, such as a new item in the Options menu that opens a dialog for extra configuration, but the new feature somehow conflicts with the Tools menu, making it invisible or inaccessible, or causing the application to crash every time we try to open it. Imagine we fix that bug: we can access the Tools menu again, only this time it’s the Options menu itself that’s not responding. Again we try to fix that, but the Tools menu bug comes back, and so on. That, roughly (and clumsily) speaking, is what’s called a regression: the emergence or re-emergence of bugs.

So, when we say we perform regression testing, what we actually mean is that we’re testing our software to verify that new features or fixes don’t conflict with its previous stable state; that is, that our software doesn’t suffer a regression.

Poor revision control, bad coding practices, and insufficient testing, among other negligent practices, are all potential triggers for software errors.

Regression test routines can be performed manually, automatically, or both, and should be run frequently, especially before a code release.
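To make the idea concrete, here is a minimal sketch of a regression test in Python, loosely modeled on the menu example above. The `MenuBar` class and its methods are hypothetical, invented only for illustration. The point is that the test pins down behaviour that already worked (the Tools menu opens) and is re-run after every change (a new Options item).

```python
class MenuBar:
    """Hypothetical stand-in for an application's menu bar."""

    def __init__(self):
        self.menus = {"Options": ["Preferences"], "Tools": ["Spell Check"]}

    def add_item(self, menu, item):
        # The new feature: adding an item should only touch the target menu.
        self.menus[menu].append(item)

    def open(self, menu):
        # Returns a copy of the menu's items; raises KeyError if the menu is missing.
        return list(self.menus[menu])


def test_tools_menu_still_opens_after_options_change():
    bar = MenuBar()
    tools_before = bar.open("Tools")

    bar.add_item("Options", "Extra Configuration")  # the change under test

    # Regression check: the old behaviour must be unchanged...
    assert bar.open("Tools") == tools_before
    # ...and the new feature must be present.
    assert "Extra Configuration" in bar.open("Options")


# Call the test directly; a runner such as pytest would also discover it by name.
test_tools_menu_still_opens_after_options_change()
```

If a later change accidentally altered or removed the Tools menu, this test would fail and flag the regression before release.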

Performing Regression Testing

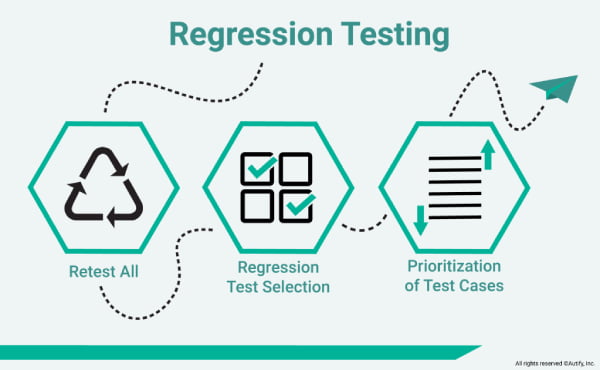

After an appropriate debugging/fixing phase, we can start regression testing. We select the most relevant cases, usually the ones which require re-testing, based on the module or component of the software where changes have been applied. Obsolete test cases are discarded, and we refine the selection according to characteristics which make cases reusable: cases with a high frequency of errors, cases which verify that the software functions correctly, cases which exercise features visible to the user, cases covering code that has changed recently, cases that previously ran successfully, and cases which are known to have failed in early testing stages, among others. In this way we establish which set of tests will be the smoke tests and which the sanity tests.
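The selection step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `RegressionCase` fields, the module names, and the selection rule are hypothetical, and a real project would pull this information from its test management tool and version control history.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegressionCase:
    # Hypothetical metadata recorded for each test case.
    name: str
    module: str
    obsolete: bool = False
    recent_failures: int = 0
    user_visible: bool = False


def select_for_regression(cases, changed_modules):
    """Drop obsolete cases, then keep those that touch changed code,
    have failed recently, or cover a user-visible feature."""
    selected = []
    for case in cases:
        if case.obsolete:
            continue
        touches_change = case.module in changed_modules
        if touches_change or case.recent_failures > 0 or case.user_visible:
            selected.append(case)
    return selected


cases = [
    RegressionCase("login_form", "auth", user_visible=True),
    RegressionCase("legacy_export", "export", obsolete=True),
    RegressionCase("tools_menu_opens", "menus"),
    RegressionCase("report_totals", "reports", recent_failures=3),
    RegressionCase("cache_eviction", "cache"),
]

selected = select_for_regression(cases, changed_modules={"menus"})
print([case.name for case in selected])
# → ['login_form', 'tools_menu_opens', 'report_totals']
```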

After performing proper exploratory testing and estimating the time needed to execute the regression tests, we identify candidates for test automation. Prioritization takes place right after this. High-priority test cases are executed first, since they cover core functionality where failures would be critical, while mid- and low-priority cases are executed last. At this stage we’re ready to execute our test suite.
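As a rough illustration of the prioritization step, the sketch below orders a suite so that high-priority cases run first. The priority labels and case names are hypothetical; the only technique shown is a stable sort on a priority rank.

```python
# Lower rank runs earlier.
PRIORITY_RANK = {"high": 0, "mid": 1, "low": 2}


def execution_order(cases):
    """Sort (name, priority) pairs so high-priority cases run first.

    sorted() is stable, so cases with equal priority keep their original order.
    """
    return sorted(cases, key=lambda case: PRIORITY_RANK[case[1]])


suite = [
    ("tooltip_text", "low"),
    ("checkout_payment", "high"),
    ("profile_avatar", "mid"),
    ("login", "high"),
]

for name, priority in execution_order(suite):
    print(priority, name)
```

Here `checkout_payment` and `login` run first, followed by `profile_avatar` and then `tooltip_text`.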

Regression Testing Techniques

As some may know, there are several technical approaches to regression testing. Here’s a varied –though not necessarily complete– list:

- Corrective, which is usually done when no changes in the code are made, hence implying less run time.

- Retest-all, which is used when minor changes are made in the code, but covers all scenarios and therefore is time-consuming.

- Selective, which focuses only on selected cases for a module whose code may have changed. It’s less time-consuming and makes it possible to test both existing and new code at once.

- Progressive, also used when minor changes are made in the code. It makes it possible to test an updated version of the product without affecting its current features. This makes for quite complex configuration and test preconditions.

- Complete, usually done when the code has undergone many changes and no further changes are intended. As it is very effective at finding bugs within a short time span, it is the last step before the product is first made available to the end user.

- Partial, which involves a selection of specific, related modules that are likely to have been affected by changes in the code. It saves time when checking for bugs, without the overhead of retesting the rest of the code.

- Unit, a pillar of the testing process, which focuses on a single unit of code, isolating it from the rest, every time a change to the code is completed. It clears the way for process planning, especially in a Test-Driven Development paradigm.
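To illustrate the unit approach from the list above, here is a minimal sketch that isolates a single function from its dependency using `unittest.mock` from the Python standard library. The `order_total` function and the `tax_for` method are hypothetical names chosen for the example.

```python
from unittest.mock import Mock


def order_total(cart, tax_service):
    """Unit under test: sums item prices and adds tax from an external service."""
    subtotal = sum(price for _, price in cart)
    return round(subtotal + tax_service.tax_for(subtotal), 2)


def test_order_total_in_isolation():
    # Replace the real tax service with a mock so only order_total is exercised.
    tax_service = Mock()
    tax_service.tax_for.return_value = 2.50

    total = order_total([("book", 10.00), ("pen", 15.00)], tax_service)

    assert total == 27.50
    # The unit must ask for tax on the subtotal exactly once.
    tax_service.tax_for.assert_called_once_with(25.00)


test_order_total_in_isolation()
```

Because the dependency is mocked, a failure here points at the unit itself rather than at the service it talks to.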

Automating Regression Tests

Naturally, regression testing is a time- and resource-intensive process. A QA team will usually need to run a lot of test cases in order to cover the most crucial components of the software potentially affected by new changes. That said, it is only common sense to see regression tests as a natural candidate for automation.

The possibilities with automation are enormous compared to manual testing. By using automated test scripts, which can be written in a variety of programming languages such as Java, JavaScript, or Python, QA testers can parametrize functions, locate and map elements, make assertions, debug errors, get analysis reports and more, with notable speed and efficiency. Once the test preparation is done, the tester can just execute the suite, sit back for a minute and watch the tool do it all, without a single click. By implementing certain design patterns, testers can also adapt or scale their testware without necessarily changing any of its core scripts.
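As a small illustration of parametrization and assertions, the sketch below runs one check against a table of inputs and expected outputs and reports the result. The `slugify` function and the test data are hypothetical; test frameworks offer richer versions of the same idea.

```python
def slugify(title):
    """Hypothetical function under test: turns a title into a URL slug."""
    return "-".join(title.lower().split())


# Each tuple is one parametrized case: (input, expected output).
CASES = [
    ("Regression Testing", "regression-testing"),
    ("  Extra   Spaces  ", "extra-spaces"),
    ("already-a-slug", "already-a-slug"),
]


def run_cases(func, cases):
    """Run every case and collect failures instead of stopping at the first one."""
    failures = []
    for given, expected in cases:
        actual = func(given)
        if actual != expected:
            failures.append((given, expected, actual))
    return failures


failures = run_cases(slugify, CASES)
print(f"{len(CASES) - len(failures)} passed, {len(failures)} failed")
assert not failures, failures
```

Adding a new scenario then means adding one row to `CASES`, which is one way testware can grow without touching its core script.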

Best Practices for Regression Testing

- Staying up to date with regression suites: keeping up with changes in testing priorities is key.

- Staying up to date on new code changes: maintain fluid communication between devs and the whole QA team.

- Prioritizing test cases: through careful impact assessment and attention to requirements, establish order in the midst of chaos.

- Grasping the scope of our tests: a good plan starts by first getting certain facts right. We need to know what our project will encompass, considering time and goals.

- Selecting the right cases for automation: by knowing what to automate, you optimize automation and maximize the testing project in general.

- Assessing ROI: consider when to select a subset of test cases and when to retest all. Automation in particular brings notable intrinsic benefits for ROI:

  - Cost and time savings (fewer person-hours, shorter time to market)

  - Repeatability (consistent execution)

  - Traceability (a precise track record of execution)

  - Availability (automated tests can run virtually non-stop)
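A back-of-the-envelope way to reason about automation ROI is to ask after how many regression runs the up-front effort pays for itself. The sketch below assumes a deliberately simple model (a one-off build cost and a fixed saving per run) and entirely hypothetical numbers; real assessments would also account for maintenance, tooling, and infrastructure.

```python
import math


def break_even_runs(build_hours, manual_hours_per_run, automated_hours_per_run):
    """Number of regression runs after which automation has paid for itself.

    Returns None when an automated run saves no time over a manual one.
    """
    saving_per_run = manual_hours_per_run - automated_hours_per_run
    if saving_per_run <= 0:
        return None
    return math.ceil(build_hours / saving_per_run)


# Hypothetical figures: 120 hours to automate, 40 hours per manual run,
# 2 hours of human attention per automated run.
print(break_even_runs(build_hours=120, manual_hours_per_run=40, automated_hours_per_run=2))
# → 4
```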

Automating Regression Tests with Autify

Features which make Autify an intelligent choice for your regression tests:

- A SaaS delivery model.

- A no code/low code platform. No programming knowledge needed.

- A recording GUI which is ideal for playing back test scenarios.

- Test script maintenance and adaptation to UI changes while alerting the QA team, all via AI algorithms.

- Cross-browser compatibility, including mobile devices, integration with Slack, Jenkins, TestRail, etc.

- Built-in reporting –no third party tools.

- Human tech customer support.

If you do a brief search online you will notice there are a lot of potential candidates to suit our toolbox. Nonetheless, when it comes to pricing, most of them aren’t exactly what we would deem straightforward. Besides, real, comprehensive tech customer support is a value that differentiates a good tool/service from an average one. That’s a thing to keep in mind.

At Autify we take such things seriously, because our clients’ success is both the cause and the effect of our own.

We invite you to check these amazing customer stories:

- How Did Asoview Automate 200 Scenarios? ‘It Really Is a No-Code Testing Solution for Everyone!’

- Escaping the downward spiral of regression with Autify: from 40 hours of testing to zero using codeless test automation.

You can see more of our clients’ success stories here: https://autify.com/why-autify

- Autify is positioned to become the leader in Automation Testing Tools.

- We raised 10M in Series A funding in October 2021 and are growing fast.

As said before, transparent pricing is key to our business philosophy.

At Autify we have different pricing options available in our plans: https://autify.com/pricing

- Small (Free Trial). Offers 400~ test runs per month, 30 days of monthly test runs on 1 workspace.

- Advance. Offers 1000~ test runs per month, 90~ days of monthly test runs on 1~ workspace.

- Enterprise. Offers Custom test runs per month, custom days of monthly test runs and 2~ workspaces.

All plans invariably offer unlimited testing of apps and number of users.

We sincerely encourage you to request our Free Trial and our Demo for both Web and Mobile products.