What Is Regression, After All?

It’s common to talk about regression testing without ever defining the concept behind it.

In software development, a regression happens when a change unintentionally breaks something that used to work, so the application slides back to a previous, unwanted state. For example, suppose we add a new feature to our application, such as a new item in the Options menu that opens a dialog for extra configuration. Somehow that feature conflicts with the Tools menu: the Tools menu becomes invisible or inaccessible, or, when we can reach it, the application crashes every time we try to open it. We fix that bug and can access the Tools menu again, only now it’s the Options menu that stops responding. We fix that too, and the Tools menu bug comes back, and so on. That, in a rather dramatized form, is a regression.
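To make the idea concrete, here is a minimal sketch of a regression test in Python, written in plain pytest style. The `MenuBar` class and its methods are hypothetical stand-ins for an application’s UI state, not part of any real product; the point is that the test pins down behavior that already worked, so a later change that breaks either menu fails loudly instead of slipping through.

```python
class MenuBar:
    """Hypothetical stand-in for an application's menu bar (illustration only)."""

    def __init__(self):
        # Each menu name maps to the items it currently exposes.
        self.menus = {"Options": ["Preferences"], "Tools": ["Spell check"]}

    def add_item(self, menu, item):
        # The "new feature": append an item to a menu, creating the menu if needed.
        self.menus.setdefault(menu, []).append(item)

    def is_accessible(self, menu):
        # A menu counts as accessible if it exists and has at least one item.
        return bool(self.menus.get(menu))


def test_new_options_item_keeps_tools_menu_working():
    bar = MenuBar()
    bar.add_item("Options", "Extra configuration...")
    # The new feature works...
    assert "Extra configuration..." in bar.menus["Options"]
    # ...and the menus that already worked still do. If a later change broke
    # either one, this assertion would flag the regression.
    assert bar.is_accessible("Options")
    assert bar.is_accessible("Tools")
```

Running it with pytest (or simply calling the function) raises `AssertionError` as soon as either menu stops being accessible.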

Automating a Regression Test

Naturally, regression testing is a time- and resource-intensive process. A QA team usually needs to run a large number of test cases to cover the most crucial components of the software that new changes might affect. That makes regression tests a natural candidate for automation.

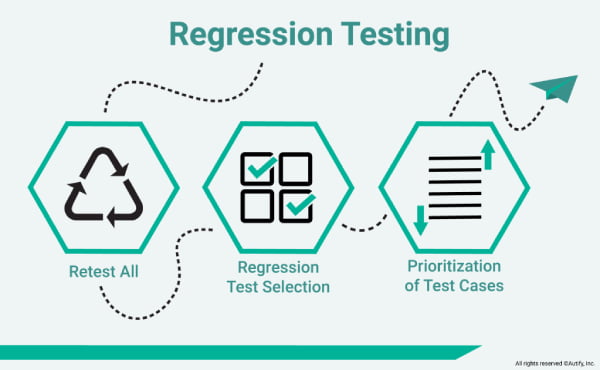

As some readers may already know, there are several types of regression testing:

- Corrective: usually done when no changes have been made to the code, which means shorter run times.

- Retest-all: used when minor changes are made to the code; it covers all scenarios and is therefore time-consuming.

- Selective: focuses only on selected test cases for a module whose code may have changed. It’s less time-consuming and allows current and new code to be tested at the same time.

- Progressive: used when minor changes are made to the code. It makes it possible to test an updated version of the product without affecting its current features, although it involves fairly complex configuration and test preconditions.

- Complete: usually done when the code has undergone many changes and no further changes are planned. Because it is very effective at finding bugs within a short time span, it is the final step before the product reaches end users.

- Partial: selects specific, related modules that are likely to have been affected by changes in the code. It saves time when checking for bugs, without the added burden of retesting the whole codebase.

- Unit: a pillar of the testing process. It focuses on a single unit of code, isolated from the rest, and runs every time a set of code changes is completed. It simplifies test planning, especially under a Test-Driven Development (TDD) paradigm.
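Selective and partial regression testing both come down to choosing which tests to run based on what changed. Here is a minimal Python sketch of that selection step, assuming a hand-maintained mapping from source files to the tests that exercise them; the file paths and test names in `TEST_MAP` are hypothetical.

```python
# Hypothetical mapping from source files to the regression tests that cover them.
TEST_MAP = {
    "menus/options.py": {"test_options_dialog", "test_tools_menu_visible"},
    "menus/tools.py": {"test_tools_menu_visible", "test_tools_menu_opens"},
    "billing/invoice.py": {"test_invoice_totals"},
}


def select_tests(changed_files, test_map=TEST_MAP):
    """Return (sorted tests covering the changed files, files with no mapping).

    Unmapped files are reported separately rather than silently skipped;
    a pipeline could fall back to a retest-all run when that list is non-empty.
    """
    selected = set()
    unmapped = []
    for path in changed_files:
        tests = test_map.get(path)
        if tests is None:
            unmapped.append(path)
        else:
            selected |= tests
    return sorted(selected), unmapped


tests, unmapped = select_tests(["menus/options.py", "README.md"])
print(tests)     # ['test_options_dialog', 'test_tools_menu_visible']
print(unmapped)  # ['README.md']
```

Real tools often derive such a mapping from coverage data instead of maintaining it by hand; the manual dictionary just keeps the idea visible.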

Compared to manual testing, automation opens up enormous possibilities. Using automated test scripts written in languages such as Java, JavaScript, or Python, QA testers can parametrize functions, locate and map elements, make assertions, debug errors, generate analysis reports, and more, with notable speed and efficiency. Once the tests are prepared, the tester can simply execute them, sit back for a minute, and watch the tool do everything without a single click. By applying certain design patterns, testers can also adapt or scale their testware without necessarily changing any of its core scripts.
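Several of the ideas above (locating elements, assertions, parametrization, and design patterns that keep core scripts stable) can be illustrated with the Page Object pattern, a common choice for UI test code. The Python sketch below uses a hypothetical `FakeDriver` in place of a real browser driver so it runs anywhere; the locators, class names, and page builds are also made up for illustration.

```python
class FakeDriver:
    """Hypothetical stand-in for a browser driver (e.g. Selenium WebDriver)."""

    def __init__(self, locators):
        # The set of element locators present on the current page.
        self.locators = set(locators)
        self.clicked = []

    def click(self, locator):
        # Fail the way a real driver would if the element is missing.
        if locator not in self.locators:
            raise LookupError(f"element not found: {locator}")
        self.clicked.append(locator)


class OptionsMenuPage:
    """Page Object: the single place that knows how to find Options menu elements."""

    # If the UI changes, only these hypothetical locators need updating;
    # the scenarios that use this class stay the same.
    OPTIONS_MENU = "#menu-options"
    EXTRA_CONFIG_ITEM = "#menu-options-extra"

    def __init__(self, driver):
        self.driver = driver

    def open_extra_configuration(self):
        self.driver.click(self.OPTIONS_MENU)
        self.driver.click(self.EXTRA_CONFIG_ITEM)


def check_open_extra_configuration(page_locators):
    """One scenario, parametrized over the page build under test."""
    driver = FakeDriver(page_locators)
    OptionsMenuPage(driver).open_extra_configuration()
    assert driver.clicked == [OptionsMenuPage.OPTIONS_MENU,
                              OptionsMenuPage.EXTRA_CONFIG_ITEM]


# Parametrization: run the same scenario against several (hypothetical) page builds.
PAGE_BUILDS = [
    {"#menu-options", "#menu-options-extra"},
    {"#menu-options", "#menu-options-extra", "#menu-tools"},
]

for build in PAGE_BUILDS:
    check_open_extra_configuration(build)
```

The design choice is that scenarios talk to `OptionsMenuPage`, never to raw locators, so a UI change is absorbed in one class rather than in every test.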

However, all this assumes the tester actually knows how to program.

Adapting to today

We propose another paradigm, one in which the tester can do more with less.

Picture this: instead of going through the whole preparation phase, our tool identifies and locates elements in the user interface. Instead of having to modify certain classes so the testware adapts to changes in the application code, our tool registers those changes and adapts the test cases immediately, getting rid of many boring, time-consuming maintenance chores. All of this happens autonomously. Sounds good, doesn’t it?

The web evolves at an unrelenting pace, which is why tools must keep up to stay reliable and efficient.

Autify offers:

- A SaaS delivery model.

- A no-code/low-code platform.

- A recording GUI that is ideal for recording and playing back test scenarios.

- AI-driven test script maintenance that adapts to UI changes and alerts the QA team.

- Cross-browser compatibility, including mobile devices, plus integration with Slack, Jenkins, TestRail, and more.

- Built-in reporting, with no third-party tools required.

- Technical customer support from real people.

It might sound like a no-brainer, but there’s more.

Clients first

A brief online search turns up plenty of potential candidates for our toolbox. However, when it comes to pricing, most of them aren’t exactly what we would call straightforward. Besides, real, comprehensive technical support is what sets a good tool or service apart from an average one. That’s worth keeping in mind.

At Autify we take these things very seriously, because our clients’ success is both the cause and the effect of our own.

We invite you to check out these customer stories:

- How Did Asoview Automate 200 Scenarios? ‘It Really Is a No-Code Testing Solution for Everyone!’

- Escaping the downward spiral of regression with Autify: from 40 hours of testing to zero using codeless test automation.

You can find more of our clients’ success stories here: https://autify.com/why-autify

- Autify is positioned to become the leader in automation testing tools.

- We raised $10M in Series A funding in October 2021 and are growing fast.

As mentioned above, transparent pricing is key to our business philosophy.

Autify offers several pricing plans: https://autify.com/pricing

- Small (Free Trial): 400+ test runs per month, 30 days of monthly test runs, and 1 workspace.

- Advance: 1,000+ test runs per month, 90+ days of monthly test runs, and 1+ workspaces.

- Enterprise: a custom number of test runs per month, a custom number of days of monthly test runs, and 2+ workspaces.

All plans include unlimited apps under test and an unlimited number of users.

We encourage you to request our Free Trial and a demo of both our Web and Mobile products.